1/4 - Why AI sounds convincing, even when it's wrong | Costa Blanca

Ask AI something you know well and you'll spot it quickly. Most of the answer sounds right. Then somewhere in those clean sentences, there's something wrong. Here's why that happens every single time.

Why AI sounds convincing, even when it's wrong

Ask ChatGPT, Claude, or Google AI about something you know well. Really know well. Your own profession, your own country, your own market.

Read the answer carefully.

Most of it will be right. Some of it will only sound right. And somewhere between those polished sentences, there'll be something off. A fact that's outdated. A rule from the wrong country. A claim that sounds plausible but isn't quite true.

The problem is that it reads exactly the same as the parts that are correct.

AI doesn't think. It predicts.

Here's the simplest way to understand what's actually happening.

You know the text suggestions on your phone? You type "see you at" and it suggests "6," "the office," or "noon." Your phone isn't thinking about your schedule. It's predicting what word usually comes next, based on everything you've typed before.

AI works the same way. Just on a scale that's hard to picture.

It was trained on an enormous amount of text. Websites. Books. Articles. Forums. From all of that, it learned patterns. Which ideas tend to appear together. What an answer to a question usually looks like. How a sentence about plumbing regulations typically reads, or a page about Spanish property law, or a blog post about marketing for small businesses.

Google's own introduction to large language models explains it this way: a language model estimates the probability of what comes next in a sequence of text. Every word in the answer is a calculated guess, not a retrieved fact. It produces what the answer is likely to look like, based on everything it has seen.
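If you'd like to see the idea in miniature, here is a toy sketch in Python. It is not how ChatGPT or any real model is built. The example sentences and the function names are made up for illustration. It only counts which word tends to follow which, then turns those counts into probabilities.

```python
from collections import Counter, defaultdict

# Hypothetical toy "training data". Real models learn from vastly more text.
corpus = (
    "see you at the office . see you at the office . "
    "see you at noon . meet me at six ."
).split()

# Count which word follows each word in the training text.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def next_word_probabilities(word):
    """Return each candidate next word with its estimated probability."""
    counts = following[word]
    total = sum(counts.values())
    return {candidate: count / total for candidate, count in counts.items()}


def predict(word):
    """Pick the most likely next word. Nothing is looked up or verified."""
    return following[word].most_common(1)[0][0]


print(next_word_probabilities("at"))
print(predict("at"))
```

After "at", this sketch suggests "the" because that is what followed most often in its tiny sample, not because it knows anything about your plans. Feed it different example sentences and it will suggest something else with exactly the same confidence.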

Most of the time, that's genuinely useful. But useful and correct are two different things. And AI can't always tell them apart.

The confident tone is the dangerous part

A friend who doesn't know something hesitates. They say "I think," or "I'm not sure, you'd want to check that." You know to take it with a pinch of salt.

AI produces the same smooth, assured tone whether it's absolutely right or completely wrong.

There's no hesitation. No visible uncertainty. No "actually, this might be different in Spain." Just clean, confident sentences that read like they came from someone who knows.

A business owner on the Costa Blanca told me she'd asked ChatGPT about the legal rules for displaying prices in her shop window. The answer came back immediately. Detailed. Confident. Fully correct, as far as she could tell.

It was based on UK law.

The AI didn't know where she was. It just produced what price display rules typically look like, and in the training data it had seen, most of those articles had probably come from the UK. It sounded certain because that's how it always sounds.

She almost printed the answer and gave it to her solicitor.

The information has a shelf life

Every AI model was trained on data up to a certain point in time. After that date, it doesn't know what happened.

Ask it about something recent and it either admits it doesn't know, which is honest, or it fills the gap with something that sounds plausible, which is the problem. Ask it about local pricing, local regulations, or specific rules for businesses in Spain, and the training data it has to draw from is often thin, outdated, or based on other countries entirely.

It still answers. Confidently. In full sentences.

Georgetown University's Center for Security and Emerging Technology (CSET) describes this as the core of how language models work: they calculate what text is likely to come next, based on patterns in enormous amounts of data. The answer is always a prediction, not a verified fact.

That's a fundamental thing to understand before you trust AI with anything that matters.

Why this is different from a Google search

When you search for something on Google, you get links to sources. You can see who wrote it, when, and where it came from. You can judge whether the source is credible.

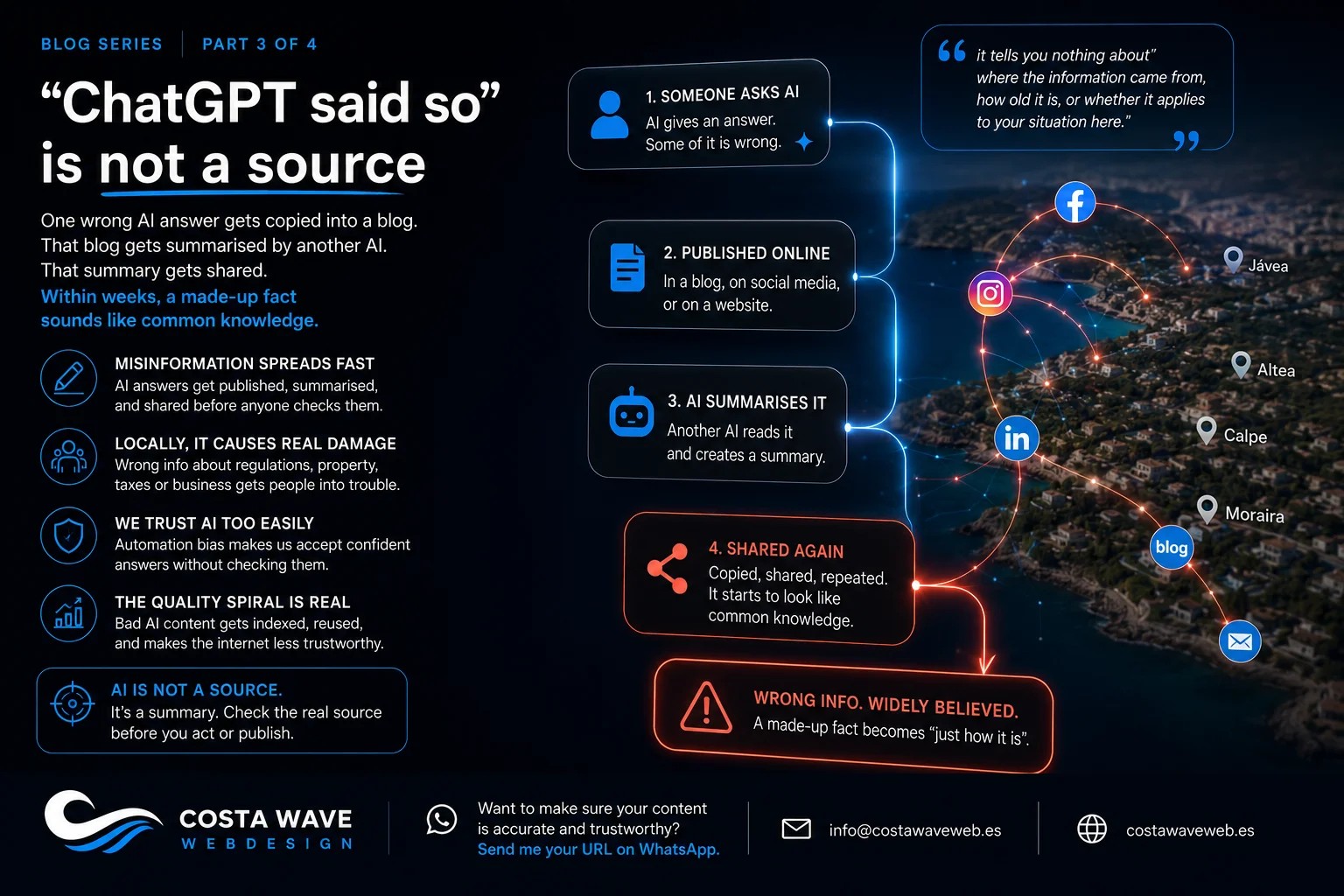

AI gives you a finished answer with no visible source. No date. No country of origin. No way to see where the information came from or how old it is.

It feels more efficient. And sometimes it is. But it removes the step where you would normally evaluate the source, and that step exists for a reason.

"AI said so" is never enough

This is the thing I see most often on the Costa Blanca.

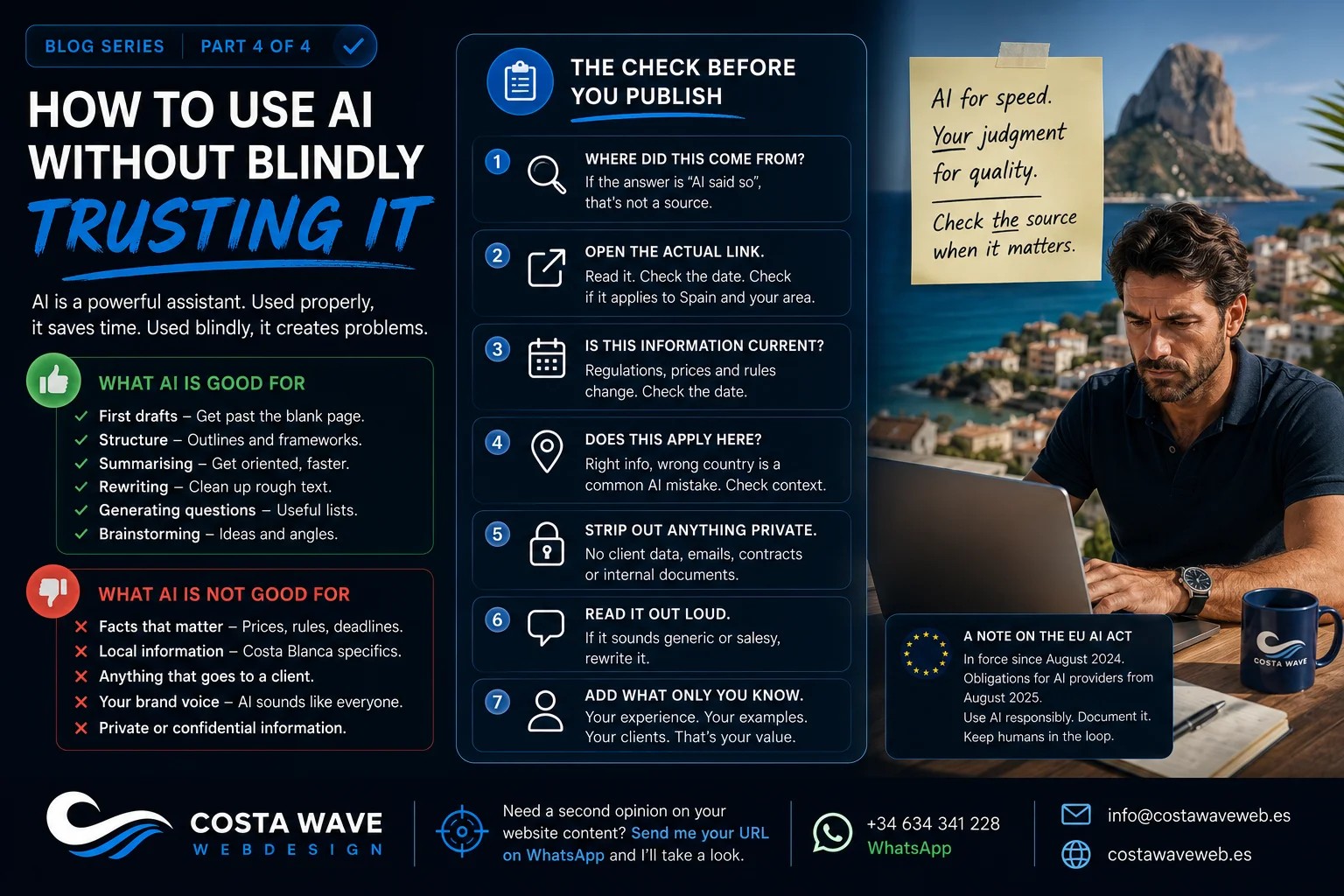

A business owner uses AI to write website content. Or to get advice on SEO. Or to check a regulation. And they treat the output as done. Finished. Ready to use.

AI can genuinely help with all of those things. I use it myself. But the output is a starting point, not a final answer.

The facts still need checking. The local details still need adding. Any claim that affects your business, your clients, or your legal position needs a real source.

Not the AI's summary of a source. The actual source, opened, read, and checked for date and country.

The next article in this series is about what happens when AI doesn't have the answer but gives you one anyway. That's called hallucination, and it's more common than most people realise.

Read part 2: AI doesn't lie. It fills gaps.

If you want to know whether your website content was built on solid foundations or on AI guesswork, send me your URL on WhatsApp and I'll take a look.

Read more:

- Should you use AI to write your website content?

- Why an AI-built website won't bring in clients

- What is GEO? Why your Costa Blanca business needs to care

- Local SEO for the Costa Blanca: what actually works in 2026
