3/4 - "ChatGPT said so" is not a source | Costa Blanca

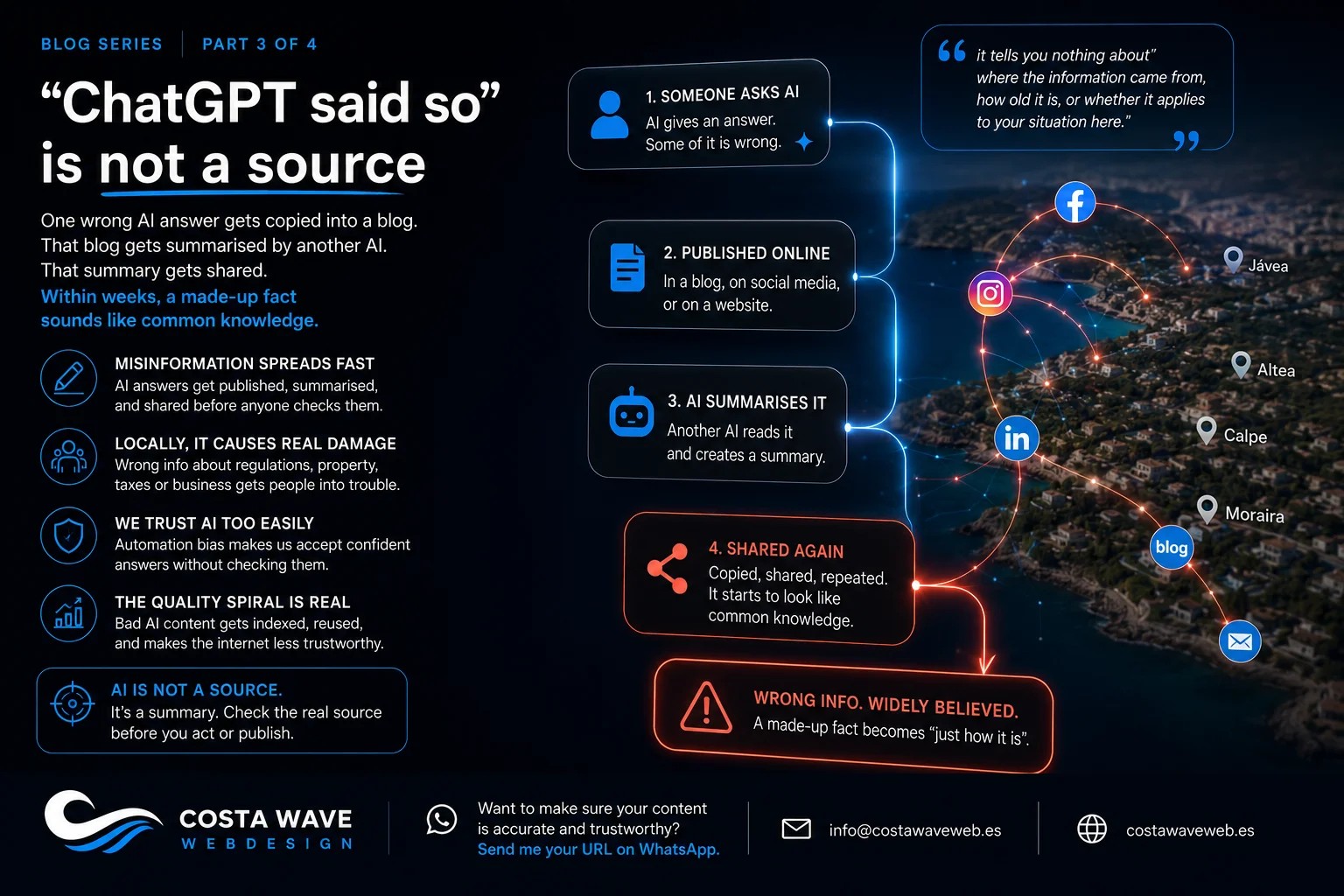

One wrong AI answer gets copied into a blog. That blog gets summarised by another AI. That summary gets shared. Within weeks, a made-up fact sounds like common knowledge. Here's how that works.

"ChatGPT said so" is not a source

Something has changed in how people share information.

A few years ago, someone might say "I read it on a website." Then "I saw it on Facebook." Now the phrase I hear constantly, from business owners in Calpe, from estate agents in Jávea, from people starting new businesses in Altea and Moraira, is this:

"ChatGPT said so."

Said as if that settles it.

How misinformation travels

Here's how it works in practice.

Someone asks an AI tool a question. The AI produces an answer. Some of that answer is accurate. Some of it is filled in from patterns, as we covered in the previous article. The person doesn't check. They publish it: in a blog post, in a newsletter, in a Facebook group, on a web page.

Someone else reads that content. They ask an AI to summarise it. That AI produces a new version, now based partly on the original wrong information. That version gets shared too.

Within a few weeks, the original mistake has been copied, summarised, and repeated often enough that it starts to look like common knowledge. A statistic that was invented. A regulation that doesn't apply here. A price that was based on another country.

The Stanford Cyber Policy Center has documented how AI-generated content lowers the cost of producing misinformation at scale. Content that previously took teams of people to create can now be generated in seconds, and once it's published it gets indexed, summarised, and shared before anyone checks it.

The local version of this problem

On the Costa Blanca, this plays out in a very specific way.

Estate agents in Altea sharing AI-written descriptions of the buying process in Spain. Some of that information is accurate for a general European market. Some of it isn't accurate for Spain. Some of it isn't accurate for the Valencia region specifically.

Business owners in Calpe asking AI for advice on local licensing, then putting that advice on their website as if it's fact. Visitors in Benidorm writing AI-generated reviews. Tour operators in Jávea using AI to write content about local areas without anyone who actually knows those areas checking it first.

None of these people are trying to mislead anyone. They're using a tool that feels reliable because it sounds reliable. That's the problem.

When the wrong information is about something general, the damage is limited. When it's about Calpe's building regulations, Moraira's rental licensing rules, or the specific process for buying a property in the Valencia region, someone reading it in Amsterdam and planning a move here is going to make decisions based on it.

The "automation bias" problem

There's a name for what happens when people trust AI output more than they should.

NIST, the US National Institute of Standards and Technology, calls it automation bias: the tendency to consider AI-generated content as better or more reliable than other sources, simply because a computer produced it.

NIST's AI Risk Management Framework documents this as a risk of AI systems in real-world use. People override their own judgment because the AI seems so confident.

It's the same reason the lawyer in New York in the previous article didn't check the cases ChatGPT cited. The cases looked right. The AI was confident. The lawyer's instinct to verify got overridden by the plausibility of the output.

That happens at a small scale every day. A business owner in La Nucia reads an AI answer about VAT rules and stops there. A property buyer in Alfaz del Pi reads an AI-generated summary of the buying process and treats it as a guide. A new arrival in Denia asks AI for advice on setting up a business and follows it without checking with a gestor.

SEO and the quality spiral

There's a side effect that affects everyone who has a website on the Costa Blanca.

When large amounts of AI-generated content, some of it accurate, some of it not, get published across the internet, search engines start to index that content. AI systems then train on it, which means the next generation of AI models learns partly from content that was already generated by AI.

Google's documentation on helpful content specifically addresses this: content produced primarily to rank in search rather than to genuinely help people gets lower rankings. That applies to AI-generated content published without proper review.

The practical result for local businesses: if your competitors are filling their websites with unreviewed AI content and you're not, your site becomes relatively more trustworthy in Google's eyes. That's an advantage worth having.

But if your site also contains unreviewed AI content with wrong local details, wrong dates, or claims that can't be verified, it gets caught in the same downward spiral.

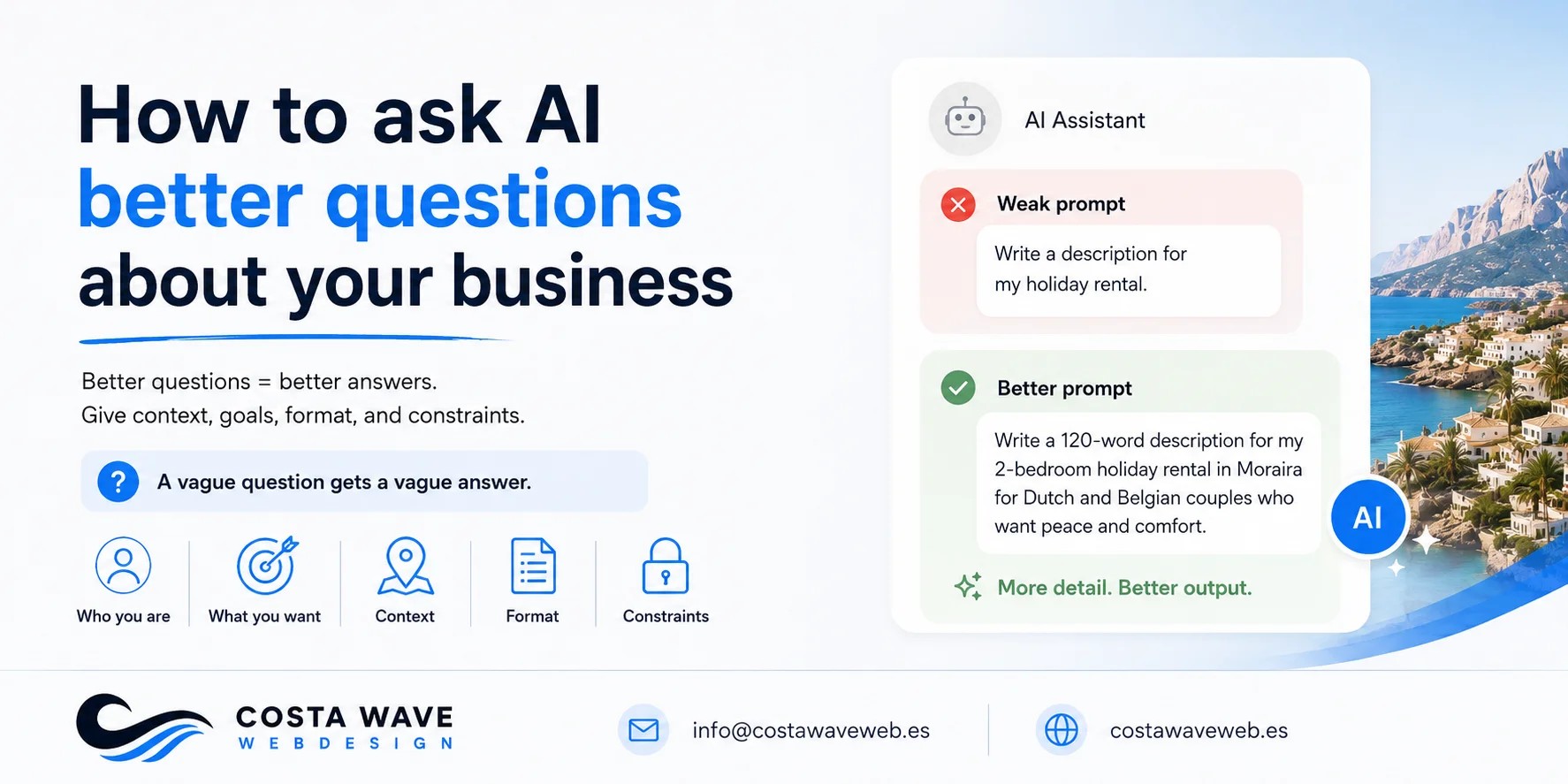

The difference between a source and a summary

This is the practical point that matters most.

AI is not a source. It's a summary of other sources, filtered through a model that sometimes fills gaps with invented content.

A real source has an author. A date. A location. A publication. Something you can point to and say: this is where this information came from, this is when it was written, this is who wrote it, and this is the country it applies to.

"ChatGPT said so" has none of that. It tells you nothing about where the information came from, how old it is, or whether it applies to your situation in Altea or Jávea or Calpe.

That doesn't mean AI output is useless. It means you need to follow it back to a real source before you act on it or publish it.

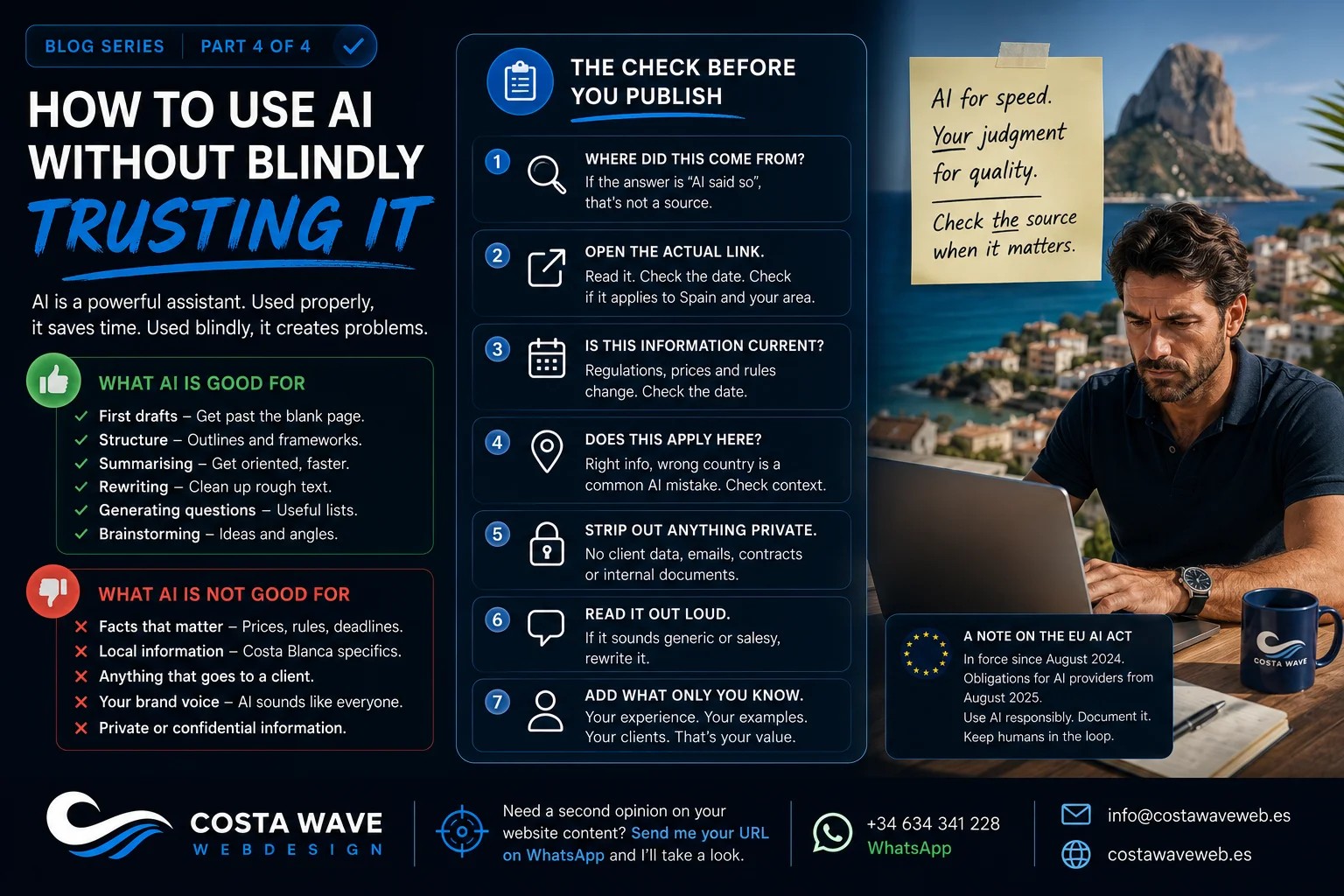

The next and final article in this series is about how to actually do that: how to use AI in a way that saves you time without letting it make decisions for you.

Read part 4: How to use AI without blindly trusting it.

If you want to know whether the content on your website is helping or hurting your reputation in local search, send me your URL on WhatsApp and I'll give you a straight answer.

Read more:

- Why AI sounds convincing, even when it's wrong

- AI doesn't lie. It fills gaps.

- Should you use AI to write your website content?

- What makes a good website? A practical guide for businesses on the Costa Blanca
