2/4 - AI doesn't lie. It fills gaps. | Costa Blanca

AI doesn't know when it doesn't know something. It fills the gap with what sounds plausible. That's not a bug. It's how the technology works. Here's what that looks like in practice.

AI doesn't lie. It fills gaps.

There's a word that comes up a lot when people talk about AI getting things wrong.

Hallucination.

It sounds dramatic. Like the AI is seeing things. But what it actually means is much more ordinary, and much more dangerous for that.

What hallucination actually means

NIST, the US National Institute of Standards and Technology, uses a more precise term for it: confabulation. The AI produces content that is confidently stated and wrong. Not randomly wrong. Plausibly wrong. Wrong in a way that fits the pattern of how a correct answer would read.

OpenAI has written about why this happens. Models can be trained in ways that reward producing an answer over admitting uncertainty. So when the model doesn't have reliable information, it doesn't always say so. It produces something that sounds like an answer.

The result is a tool that fills gaps the same way it fills everything else: smoothly, confidently, without flagging that anything is different.
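The incentive described above can be shown with a little arithmetic. What follows is a toy sketch, not how OpenAI or anyone else actually trains or grades a model: the scoring rule, the function names, and the probabilities are all assumptions made up for illustration. It compares guessing with saying "I don't know" when a correct answer earns one point and a wrong answer costs either nothing or one point.

```python
# Toy model of a grading rule. All names and numbers here are illustrative
# assumptions, not a real training or evaluation setup.

ABSTAIN_SCORE = 0.0  # assumption: answering "I don't know" earns nothing


def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Average score for guessing: +1 when right, -wrong_penalty when wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_strategy(p_correct: float, wrong_penalty: float = 0.0) -> str:
    """Pick whichever option scores higher on average under this rule."""
    guess = expected_score(p_correct, wrong_penalty)
    return "guess" if guess > ABSTAIN_SCORE else "abstain"


if __name__ == "__main__":
    for p in (0.1, 0.5, 0.9):
        print(
            f"chance of being right {p:.0%}: "
            f"no penalty -> {best_strategy(p)}, "
            f"penalty of 1 -> {best_strategy(p, wrong_penalty=1.0)}"
        )
```

When wrong answers cost nothing, guessing comes out ahead even at a 10% chance of being right. Only when a wrong answer carries a cost does "I don't know" become the better move for a shaky answer.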

What it looks like in practice

A lawyer in New York submitted a legal brief to a court in 2023. The brief cited several court cases as precedents.

The cases didn't exist.

ChatGPT had generated them. Real-sounding names, real-sounding case numbers, real-sounding summaries. The lawyer hadn't checked. The opposing lawyers couldn't find the cases, and the judge concluded they were bogus. The lawyers responsible were fined $5,000.

The case was covered by the New York Times and became one of the most widely reported examples of AI hallucination causing real damage.

That's an extreme case. But the same thing happens at a much smaller scale every day.

A business owner asks AI for the average price of web design in Spain. AI produces a number. It sounds specific. It might be based on UK pricing, 3-year-old data, or nothing in particular.

Someone asks for the rules around employing staff in Spain. AI gives a detailed answer. Confident. Structured. Based on a mix of general European labour law and whatever else it found relevant in the training data.

Someone asks for the opening hours of a specific business on the Costa Blanca. AI gives opening hours. They might be right. They might be from a listing that hasn't been updated in 2 years.

In each case, the answer reads the same whether it's accurate or invented.

Fake sources are a specific problem

AI doesn't just invent facts. It invents sources.

Ask it to back up a claim with a reference and it will sometimes produce a citation that looks entirely real: a plausible author name, a plausible journal, a plausible title, a plausible year. Click the link and the article doesn't exist.

This is particularly dangerous because a source feels like proof. You see a citation and your guard drops. You stop reading critically. That's the moment the invented information gets through.

The rule is simple: if a source matters, open it yourself. Don't trust the title. Don't trust the summary. Open the actual page, check the date, check the author, check whether it says what the AI claimed it says.
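If an AI answer comes with a long list of links, a small script can do a first pass before you start reading. This is a minimal sketch under stated assumptions: the function names are hypothetical, the `example.com` addresses are placeholders, and it uses only Python's standard library. It can only tell you that a link points nowhere. A link that loads proves nothing about whether the page says what the AI claimed, so opening it yourself is still the real check.

```python
import re
import urllib.error
import urllib.request

# Rough pattern for http(s) links in plain text (an assumption, not a full URL parser).
URL_PATTERN = re.compile(r"https?://[^\s)>\]]+")


def extract_urls(text):
    """Return the links found in text, with trailing punctuation stripped."""
    return [u.rstrip(".,;\"'") for u in URL_PATTERN.findall(text)]


def fetch_status(url, timeout=10.0):
    """Return the HTTP status code for url, or None if it cannot be reached."""
    request = urllib.request.Request(
        url, method="HEAD", headers={"User-Agent": "link-check-sketch"}
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # The server answered, but with an error such as 404.
        # Note: some sites reject HEAD requests, so an error is a hint, not proof.
        return err.code
    except (OSError, ValueError):
        # DNS failure, refused connection, timeout, or a malformed address.
        return None


def triage_links(text, fetch=fetch_status):
    """Label each link in text as dead or reachable. Reachable is not verified."""
    results = {}
    for url in extract_urls(text):
        status = fetch(url)
        if status is None or status >= 400:
            results[url] = "dead: treat the citation as unverified"
        else:
            results[url] = "reachable: still open it and read it yourself"
    return results


if __name__ == "__main__":
    answer = "Source: https://example.com/some-study (placeholder link)."
    for link, verdict in triage_links(answer).items():
        print(link, "->", verdict)
```

The `fetch` parameter is there so the check can be swapped out or tested without touching the network.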

Why it happens more with local information

AI was trained on text from across the internet. A huge amount of that text is in English and covers the US, the UK, and Western Europe in general.

Local information is underrepresented: specific regulations for businesses in Spain, property law on the Costa Blanca, tax rules for self-employed workers in the Valencia region. There's less of it in the training data. That means the model has less to go on and is more likely to fill gaps with general knowledge that may not apply locally.

This is the pattern I see most often: someone uses AI to research something specific to Spain or to the Costa Blanca, and the answer they get is technically about Europe, or worse, about a completely different country.

It reads correctly. The context is wrong.

The thing that makes this hard to spot

If AI said "I'm not sure about this" before every answer it was uncertain about, hallucination would be easy to manage.

It doesn't.

The uncertain answer and the confident answer look identical. The invented source and the real source look identical. The wrong country's regulations and the right country's regulations look identical.

You can't tell from the text itself. You have to check.

That's not a criticism of AI as a tool. It's just the reality of how it works right now. And knowing it changes how you should use it.

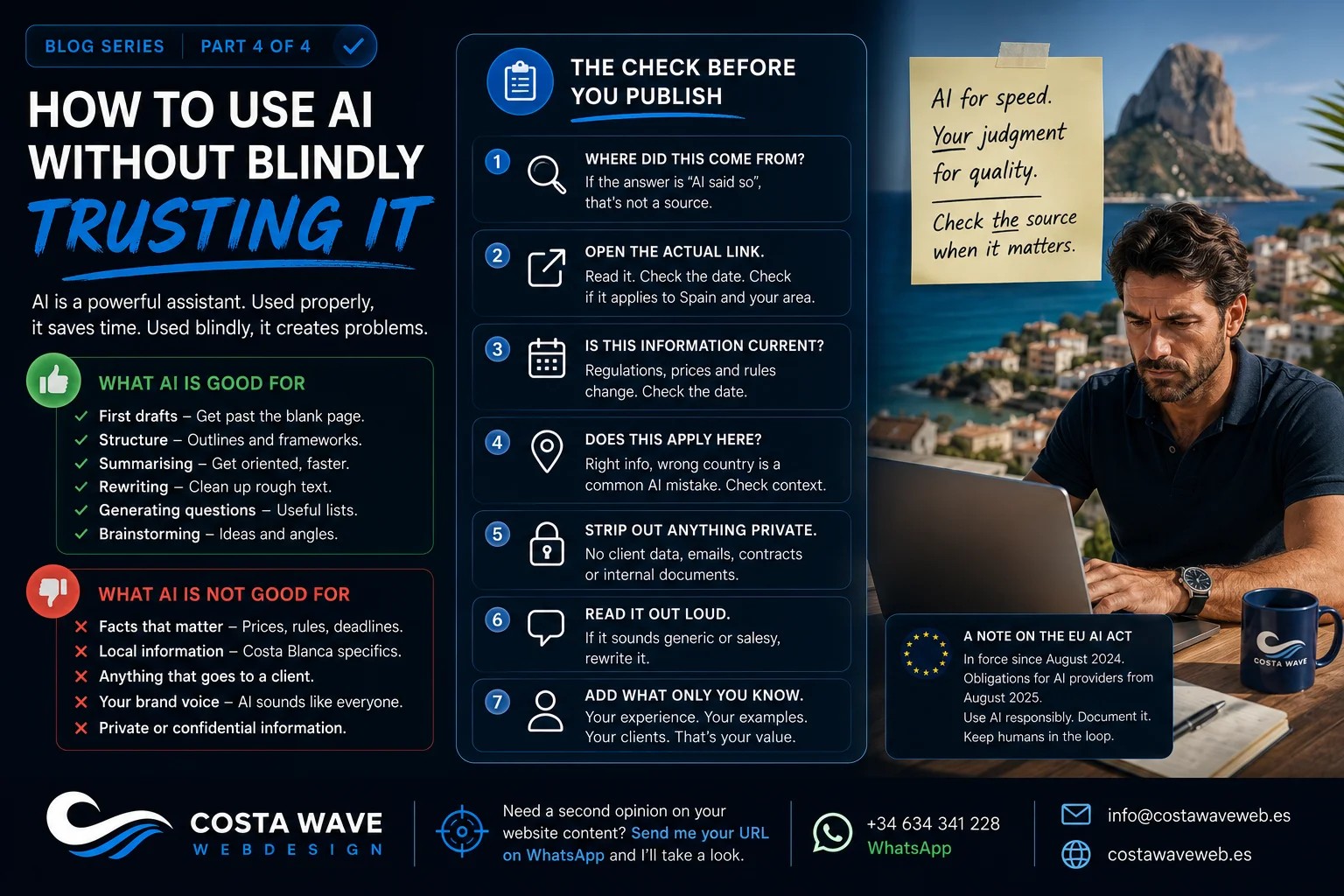

What to do about it

Check anything that matters. That means:

- Pricing: look at actual current sources for Spain specifically.

- Legal and tax rules: check with a professional or a verified Spanish government source.

- Sources and citations: open every link before you trust it.

- Local business information: check directly with the business.

- Statistics: find the original study, not the AI summary.

AI is still useful for structure, for drafts, for generating ideas, for rewriting rough text. For those things, the exact accuracy of every claim matters less.

But for anything that goes on your website, into a contract, into advice you give to a client, or into a decision that costs money: check it independently.

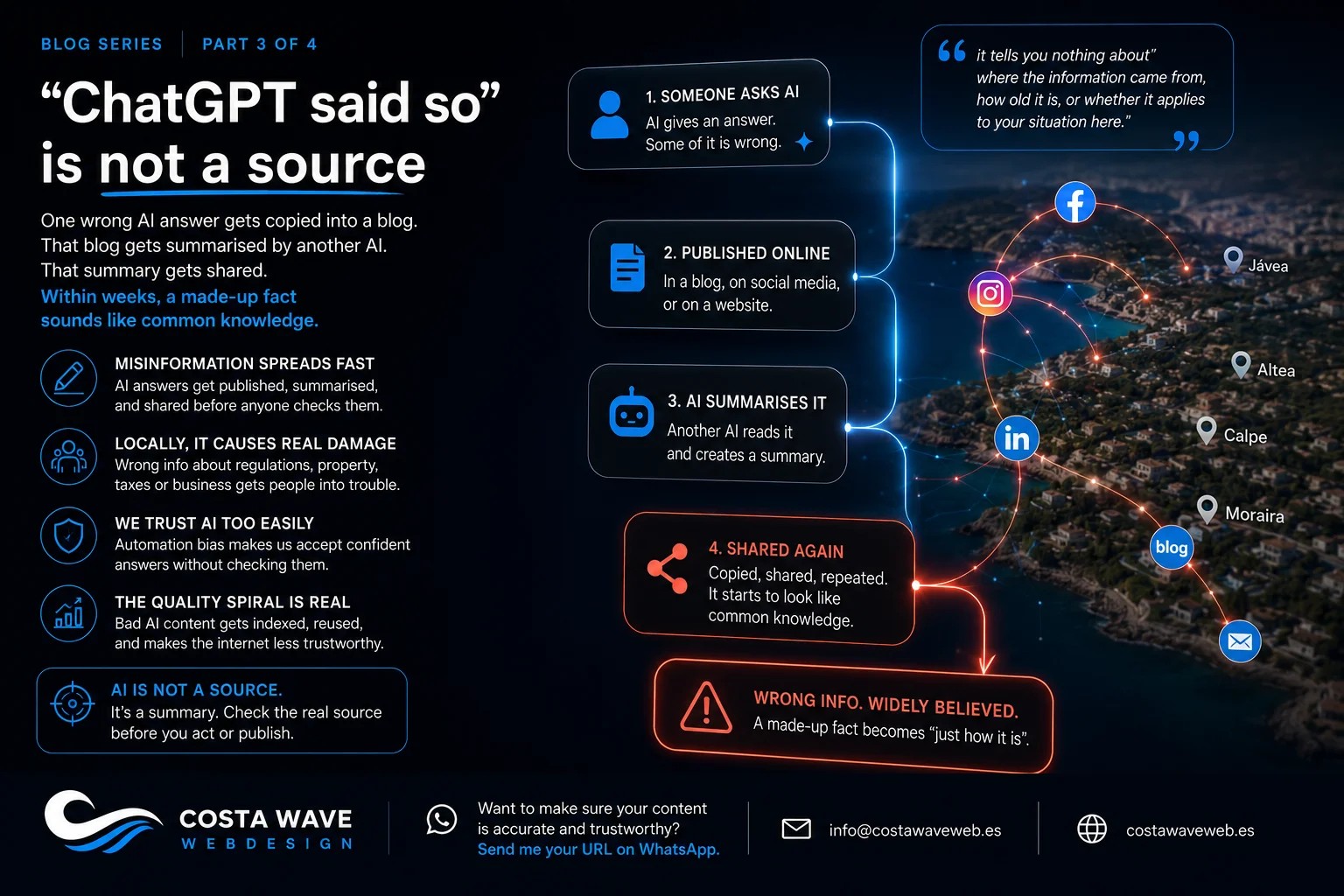

The next article is about what happens when AI-generated misinformation doesn't stay in one place. How it spreads, how it gets amplified, and why "ChatGPT said so" is a phrase that should make you more cautious, not less.

Read part 3: "ChatGPT said so" is not a source.

If you want to know whether the content on your website can be trusted, send me your URL on WhatsApp and I'll take a look.

Read more:

- Why AI sounds convincing, even when it's wrong

- Should you use AI to write your website content?

- Why an AI-built website won't bring in clients

- Local SEO for the Costa Blanca: what actually works in 2026
